Open source · Free

Claude Code Plugin for Supaflow

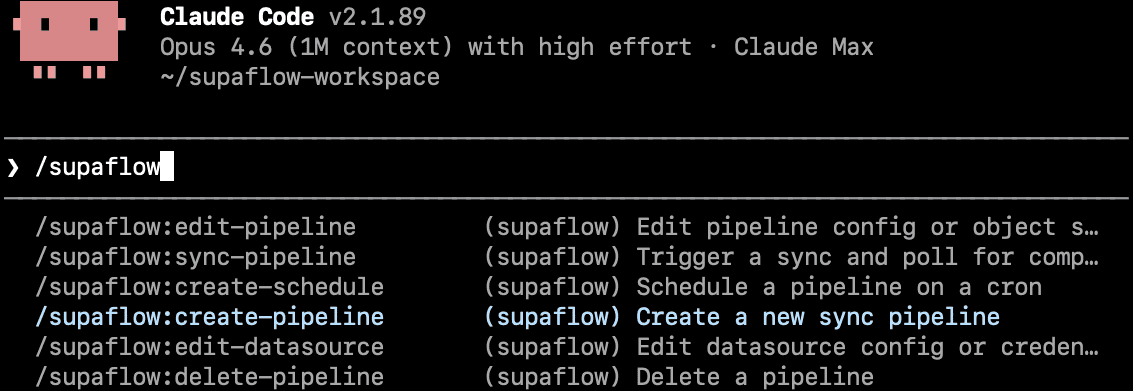

Create datasources, build pipelines, run syncs, and manage schedules from chat. Let your AI agent handle the repetitive pipeline work so your team can focus on making decisions from the data instead of wrangling it.

Generic AI agents can sketch a pipeline in seconds — but sketches don't survive real sources, schema drift, or 2am incidents. This plugin puts Claude on top of the real Supaflow CLI, so the pipeline it builds is a pipeline that actually runs in production.

What You Can Do With It

The plugin is meant for practical day-to-day pipeline work. It helps Claude stay useful when you need to create, inspect, run, or schedule real Supaflow resources.

Create datasources

Connect a source or destination from Claude Code using the same Supaflow CLI workflow you would run manually.

Build pipelines

Ask Claude to set up a new pipeline, choose objects, and confirm the final configuration before anything is created.

Run and monitor syncs

Start a sync, check job status, and inspect failures without bouncing between docs, terminal history, and dashboards.

Schedule recurring loads

Add or update a schedule from chat once the pipeline is working and you are ready to automate it.

Run known commands, not invented ones

Every Claude action routes through an approved Supaflow command. No invented flags. No guessed field names. No one-off shell scripts.
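One way to picture this kind of routing is an allowlist check that runs before anything reaches the shell. The sketch below is illustrative only and is not the plugin's actual code: the `supaflow` binary name, every table entry other than `pipelines sync`, and all flags shown are hypothetical placeholders.

```python
# Hypothetical allowlist. Only "pipelines sync" is named on this page;
# the other entries, the flags, and the "supaflow" binary name are assumptions.
APPROVED_COMMANDS = {
    ("pipelines", "sync"): {"--pipeline"},
    ("pipelines", "create"): {"--config"},
    ("datasources", "create"): {"--config"},
}


class RejectedCommand(ValueError):
    """Raised when a requested command or flag is not on the allowlist."""


def route(argv: list[str]) -> list[str]:
    """Return the full argument list to execute, or raise if unapproved."""
    if len(argv) < 2:
        raise RejectedCommand("expected '<group> <action> [flags]'")
    group, action = argv[0], argv[1]
    allowed_flags = APPROVED_COMMANDS.get((group, action))
    if allowed_flags is None:
        raise RejectedCommand(f"unknown command: {group} {action}")
    for token in argv[2:]:
        # Only long flags are checked; plain values pass through unchanged.
        if token.startswith("--"):
            flag = token.split("=", 1)[0]
            if flag not in allowed_flags:
                raise RejectedCommand(f"unapproved flag: {flag}")
    return ["supaflow", *argv]
```

Because the check returns an argument list rather than a shell string, the result could be handed to `subprocess.run` without shell interpolation, which is one reason a design like this avoids one-off scripts.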

Fork the plugin

The project is open source, so you can inspect how it works or reuse the same pattern for your own CLI-based workflows.

Why this plugin exists

Generic AI agents are good at producing a plausible pipeline. They are much worse at producing one that survives real-world edge cases. This plugin stops Claude from inventing pipelines from scratch and routes it through real Supaflow workflows, so the result is faster to build and far more reliable to operate.

Supaflow handles authentication, execution, retries, and state. Claude drives; the platform operates.

What a real session looks like

Excerpts from a real Claude Code session (March 2026) — building a Postgres to Snowflake pipeline end to end. The plugin is invoked conversationally; real commands run in the terminal; real output flows back to Claude.

Create a datasource

Session excerpt: Claude recovers from a failed connection, and the plugin auto-encrypts credentials before they land on disk.

Create a pipeline

Session excerpt: The plugin asks before acting. Claude scopes the request with structured options, respects the narrower answer, and filters the config before running the create command.

Execute a pipeline

Session excerpt: Claude uses the plugin's command syntax (pipelines sync) rather than a guessed shell command, then hands off cleanly to monitoring.

Check job status

Session excerpt: Claude polls the job, narrates state transitions in plain English (queued → picked → running → completed), and confirms success with real metrics.
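The polling pattern described here can be sketched as a small loop that records each state change until the job finishes. This is a rough illustration under assumptions, not the plugin's implementation: the state names queued, picked, running, and completed come from the excerpt, while the `failed` state, the `fetch_status` callable, and the polling limits are hypothetical.

```python
import time
from typing import Callable

# "completed" appears in the excerpt; "failed" is an assumed terminal state.
TERMINAL_STATES = {"completed", "failed"}


def watch_job(
    fetch_status: Callable[[], str],
    interval: float = 2.0,
    max_polls: int = 100,
    sleep: Callable[[float], None] = time.sleep,
) -> list[str]:
    """Poll until a terminal state and return the distinct transitions seen."""
    transitions: list[str] = []
    for _ in range(max_polls):
        state = fetch_status()
        # Record a state only when it differs from the previous one.
        if not transitions or transitions[-1] != state:
            transitions.append(state)
        if state in TERMINAL_STATES:
            return transitions
        sleep(interval)
    raise TimeoutError(f"job still not finished after {max_polls} polls")
```

Passing `fetch_status` and `sleep` as parameters keeps the loop testable without a live job: a fake status source can replay a fixed sequence of states.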

Typical Workflows

These are the kinds of tasks where the plugin is most useful: enough structure to be safe, but still conversational enough to move faster than typing everything by hand.

Set up a new warehouse sync

Ask Claude to connect a source and destination, build the pipeline, and walk you through the confirmation steps.

Reconnect a broken datasource

Use the plugin to inspect the existing setup, update credentials or settings, and retry without hunting through old terminal commands.

Check why a job failed

Ask Claude to check recent runs, inspect job status, and narrow down whether the issue is configuration, schema drift, or a source-side problem.

Schedule a pipeline after validation

Once the initial sync is healthy, have Claude add a recurring schedule instead of manually composing another CLI command.

Why It Works Better Than Free-Form AI CLI Usage

Claude helps with reasoning, Supaflow handles execution

The plugin is built for teams who want conversational help during setup and operations, but do not want the model inventing the operational layer.

Real commands are safer than generated shell snippets

Deterministic command routing, explicit confirmation gates, and setup validation reduce the common failure modes in raw AI-CLI usage: guessed flags, wrong command routing, and skipped prerequisites.
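A minimal sketch of how setup validation and a confirmation gate might be ordered in front of an action follows. It assumes nothing about the plugin's internals: the function name, the check tuples, and the returned strings are all hypothetical, chosen only to show that a skipped prerequisite or a declined confirmation stops execution.

```python
from typing import Callable, Sequence

# A named prerequisite paired with a function that reports whether it holds.
Check = tuple[str, Callable[[], bool]]


def run_gated(
    checks: Sequence[Check],
    summary: str,
    confirm: Callable[[str], bool],
    execute: Callable[[], str],
) -> str:
    """Run prerequisite checks, then ask for confirmation, then execute."""
    for name, passed in checks:
        if not passed():
            # Stop at the first unmet prerequisite; nothing is executed.
            return f"blocked: {name}"
    if not confirm(summary):
        return "cancelled"
    return execute()
```

In use, the checks could mirror the setup conditions mentioned on this page (CLI installed, authenticated, workspace selected), with `confirm` showing the final configuration to the user before anything is created.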

Learn Supaflow while using it

Each plugin command maps to a real Supaflow workflow. By the end of your first session you know how datasources, pipelines, syncs, and schedules actually connect — not just how to prompt Claude for them.

Get Started

Add the plugin to Claude Code and start a session. The plugin checks for CLI installation, authentication, and workspace selection automatically on first run.

1. Clone the plugin

2. Add it to Claude Code

3. Start Claude Code

The plugin loads automatically. If the Supaflow CLI is not installed, authenticated, or pointed at the right workspace, the plugin walks you through setup before you start making changes. Try a command like /create-pipeline or ask Claude to build a pipeline from one system to another.

See the CLI docs for how to create an API key and authenticate from the CLI.

Frequently Asked Questions

A few practical questions that usually come up before teams start using the plugin in real workflow conversations.